SMART_AI_IMAGE_DESCRIBER (PRODUCT)

AI image caption generator that uses the BLIP model to describe images in natural language.

Description

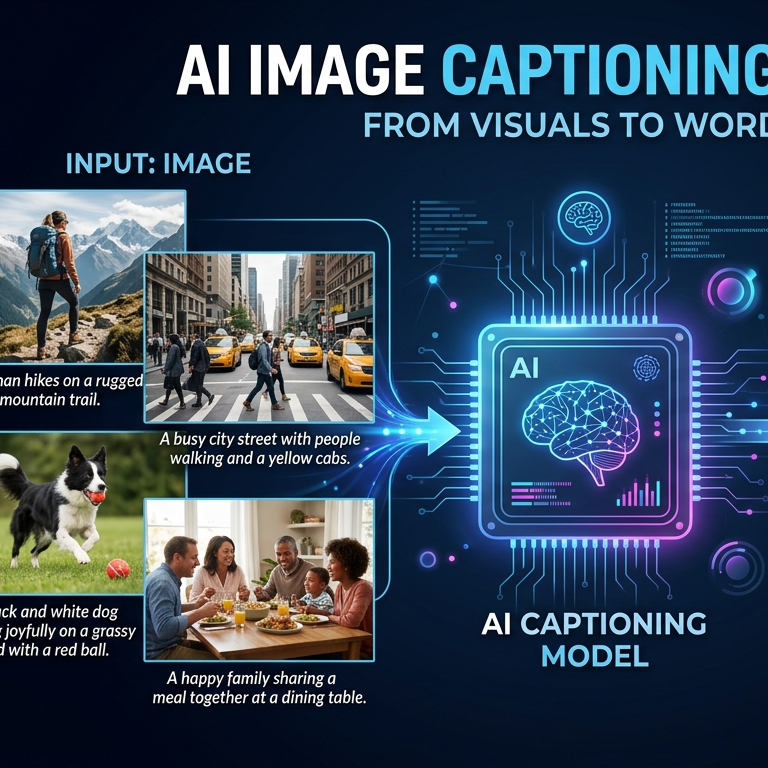

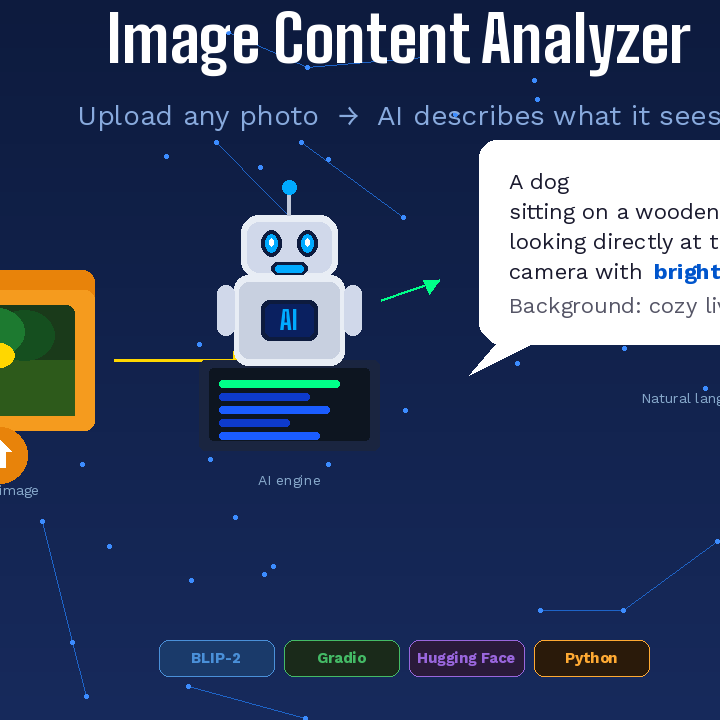

This project is an AI-powered image captioning API built on the BLIP (Bootstrapping Language-Image Pre-training) model from Salesforce. It takes an image as input and generates a natural-language description of its visual content.

The backend is built with FastAPI, and inference runs on PyTorch with Hugging Face Transformers. When a user uploads an image, the model passes it through a vision encoder and a language decoder to capture objects, scenes, and context, then produces a caption.
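The upload-to-caption flow described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the checkpoint name `Salesforce/blip-image-captioning-base`, the `/caption` route, and the helper names `generate_caption` and `create_app` are all assumptions chosen for the example.

```python
import io

# Assumption: the source does not say which BLIP checkpoint is used.
MODEL_ID = "Salesforce/blip-image-captioning-base"


def generate_caption(image, processor, model, max_new_tokens=30):
    """Run one image through the processor and model and return the caption text."""
    # The processor resizes/normalizes the image into model-ready tensors.
    inputs = processor(images=image, return_tensors="pt")
    # generate() runs the vision encoder and decodes caption token ids.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return processor.decode(output_ids[0], skip_special_tokens=True).strip()


def create_app():
    """Build a FastAPI app exposing a hypothetical POST /caption endpoint."""
    # Heavy imports are kept local so the module can be imported without them.
    from fastapi import FastAPI, File, HTTPException, UploadFile
    from PIL import Image, UnidentifiedImageError
    from transformers import BlipForConditionalGeneration, BlipProcessor

    # Load the processor and model once, at app creation time.
    processor = BlipProcessor.from_pretrained(MODEL_ID)
    model = BlipForConditionalGeneration.from_pretrained(MODEL_ID)
    model.eval()

    app = FastAPI(title="Image captioning sketch")

    # A plain `def` endpoint runs in FastAPI's thread pool, so the blocking
    # model call does not stall the event loop.
    @app.post("/caption")
    def caption(file: UploadFile = File(...)):
        data = file.file.read()
        try:
            image = Image.open(io.BytesIO(data)).convert("RGB")
        except UnidentifiedImageError:
            raise HTTPException(status_code=400, detail="Not a readable image")
        return {"caption": generate_caption(image, processor, model)}

    return app
```

If this were saved as `main.py` (a hypothetical file name), it could be served with `uvicorn main:create_app --factory`. FastAPI file uploads also require the `python-multipart` package to be installed.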

The model is lightweight and fast, which makes it suitable for real-world applications such as accessibility tools, content generation, social media automation, and other AI-based image understanding systems. It runs on both CPU and GPU and can be deployed on platforms like AI Model Place or on any server that can host a FastAPI application.
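One common way to support both CPU and GPU with PyTorch is to pick the device at startup and move the model and its inputs to it. The sketch below assumes that approach; `select_device` and `caption_on_device` are hypothetical helper names, and the project may handle device placement differently.

```python
def select_device(prefer_gpu: bool = True) -> str:
    """Return "cuda" when a GPU is wanted and available, otherwise "cpu"."""
    if not prefer_gpu:
        return "cpu"
    import torch  # imported lazily; only needed to probe for a GPU

    return "cuda" if torch.cuda.is_available() else "cpu"


def caption_on_device(image, processor, model, device, max_new_tokens=30):
    """Caption one image, assuming `model` was already moved to `device`."""
    # The input tensors must live on the same device as the model weights.
    inputs = processor(images=image, return_tensors="pt").to(device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return processor.decode(output_ids[0], skip_special_tokens=True).strip()


# Typical wiring (not executed here, since it needs a loaded model):
#   device = select_device()
#   model.to(device)
#   text = caption_on_device(image, processor, model, device)
```

Choosing the device once and passing it explicitly keeps the same code path working on a CPU-only server and on a GPU host.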
